Post-Quantum Cryptography Explained: Why It Matters for the CAS-005 Exam and Beyond

Post-quantum cryptography (PQC) is no longer a niche research topic. With NIST publishing the first federal standards for quantum-resistant algorithms in 2024, architects and security engineers need to start planning real migrations now [1]. For the CompTIA CASP+ (CAS-005) exam, since rebranded as SecurityX, you’re expected to understand cryptographic design, cryptographic agility, and long-term data protection, which is exactly where PQC fits [2].

This tutorial breaks down the quantum threat, what NIST’s PQC standards actually are, how they intersect with CAS-005 objectives, and how to sketch a realistic migration roadmap that would make sense both in an exam performance-based question (PBQ) and in your environment.

1. Why Quantum Computing Breaks Today’s Cryptography

Most of the internet’s security depends on public-key cryptography: algorithms like RSA, Diffie–Hellman, and elliptic curve cryptography (ECC). Their security rests on math problems, such as factoring large integers or computing discrete logarithms, that are computationally infeasible for classical computers.

A sufficiently powerful quantum computer changes that. Shor’s algorithm can factor large integers and compute discrete logarithms in polynomial time, which would break RSA, DH, and ECC once large-scale quantum computers exist [1]. Symmetric algorithms (e.g., AES) and hash functions (e.g., SHA-2) are less affected: Grover’s algorithm offers only a quadratic speedup for brute-force search, which roughly halves the effective key strength in bits and can be offset by doubling key sizes (e.g., AES-128 → AES-256) [1].
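To make the Grover arithmetic concrete: a brute-force search over a k-bit key needs on the order of 2^k classical guesses but only about 2^(k/2) Grover iterations. The short Python sketch below applies that rule of thumb. The function name is illustrative, and the estimate ignores the practical overhead of running Grover’s algorithm at scale.

```python
def grover_effective_bits(key_bits: int) -> int:
    """Rough security level, in bits, of a symmetric key against Grover search."""
    # A quadratic speedup turns 2**k guesses into about 2**(k/2) iterations.
    return key_bits // 2


for name, bits in [("AES-128", 128), ("AES-256", 256)]:
    quantum_bits = grover_effective_bits(bits)
    print(f"{name}: ~{bits} bits classical, ~{quantum_bits} bits against Grover")
```

Under this estimate AES-256 retains roughly the margin AES-128 has today, which is why symmetric keys are lengthened rather than replaced.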

For CAS-005, the key takeaway is conceptual: public-key primitives based on factoring and discrete logs are vulnerable in a post-quantum world, while symmetric primitives survive with larger keys. That informs which controls you must replace versus simply strengthen.

2. The “Harvest Now, Decrypt Later” Problem

Many organizations assume, “We’ll migrate to PQC when quantum computers are actually here.” That’s dangerous because of harvest now, decrypt later (HNDL) attacks. Adversaries can:

- Record encrypted traffic today (e.g., TLS sessions, VPN tunnels)

- Store it for years or decades

- Decrypt it in the future once quantum capabilities mature

Any data that must remain confidential for a long time—think health records, financial histories, intellectual property, long-lived certificates, and government data—may be exposed retroactively if you wait too long to adopt post-quantum cryptography [1][3].

CAS-005 scenarios often ask you to prioritize protections based on data sensitivity and lifespan. PQC is a classic example: low-sensitivity, short-lived data may not justify aggressive migration; highly sensitive, long-lived data likely does.
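One common way to reason about this timing, often attributed to Michele Mosca, is a simple inequality: if the years the data must stay secret plus the years a migration will take exceed the years until a capable quantum computer exists, traffic captured today is at risk. A minimal sketch, assuming placeholder numbers (the 15-year horizon is an illustration, not a prediction):

```python
def exposed_to_hndl(shelf_life_years: float,
                    migration_years: float,
                    years_until_quantum: float) -> bool:
    """True if data recorded today could still matter once it becomes decryptable."""
    return shelf_life_years + migration_years > years_until_quantum


# Hypothetical inputs chosen only to show both outcomes.
print(exposed_to_hndl(shelf_life_years=25, migration_years=5, years_until_quantum=15))  # health records
print(exposed_to_hndl(shelf_life_years=1, migration_years=5, years_until_quantum=15))   # ephemeral analytics
```

Because the quantum horizon is uncertain, the useful output is less the yes/no answer than how sensitive it is to that assumption.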

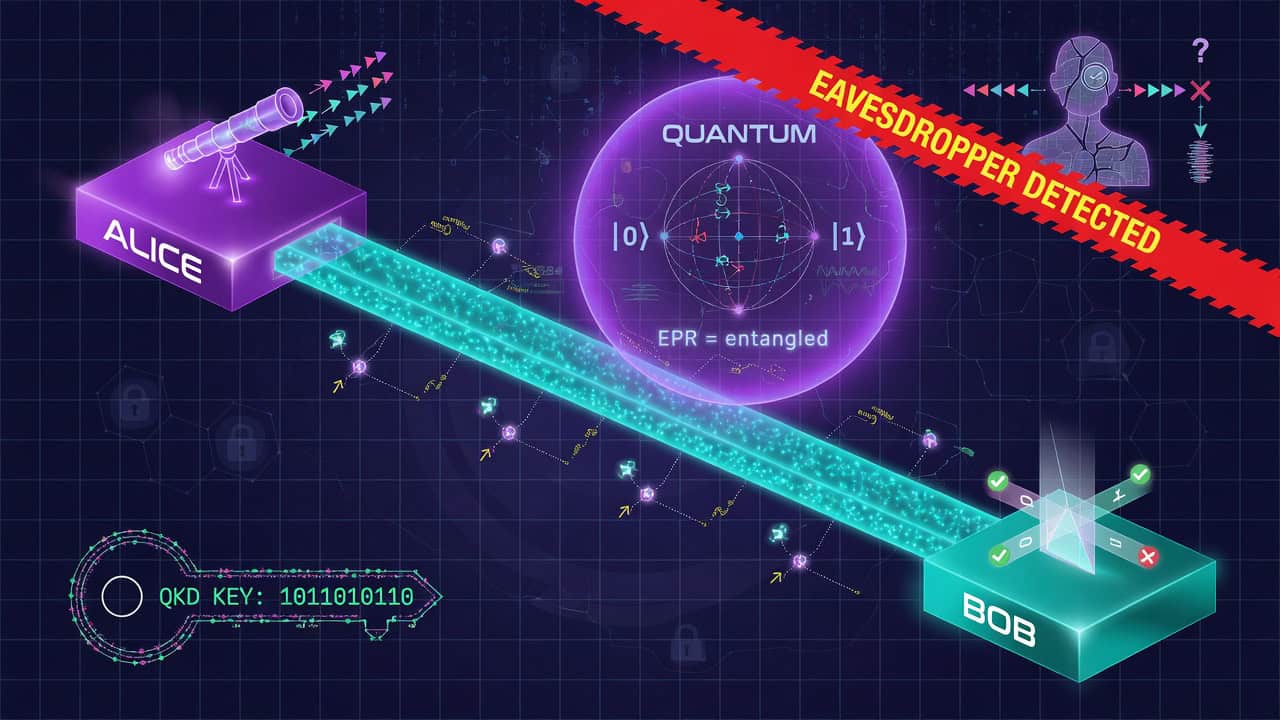

3. What Is Post-Quantum Cryptography (PQC)?

Post-quantum cryptography refers to cryptographic algorithms that are designed to be secure against both classical and quantum adversaries and that run on classical computers [1]. PQC is not quantum key distribution (QKD) or any other physics-based scheme; these are math-based algorithms intended to replace RSA and ECC in the software and hardware we already use.

NIST ran a multi-year Post-Quantum Cryptography Standardization project to evaluate candidate algorithms. In August 2024, NIST approved three Federal Information Processing Standards (FIPS) defining the first PQC standards for general use [1]:

- CRYSTALS-Kyber, standardized as ML-KEM (FIPS 203): Key-encapsulation mechanism (KEM) for key establishment

- CRYSTALS-Dilithium, standardized as ML-DSA (FIPS 204): Digital signature scheme

- SPHINCS+, standardized as SLH-DSA (FIPS 205): Stateless hash-based signature scheme

NIST also selected FALCON, another lattice-based signature scheme, for standardization; its FIPS publication will follow separately [1]. In parallel, stateful hash-based signature schemes like XMSS and LMS are approved in NIST SP 800‑208 for certain use cases (e.g., code signing) [4].

For CAS-005, you don’t need deep lattice theory, but you should recognize the names, categories, and basic trade-offs (key sizes, performance, implementation complexity).

4. NIST’s PQC Standards: What You Actually Need to Know

At a CAS-005 level, focus on roles and design implications of the main NIST PQC algorithms rather than the math proofs.

4.1 CRYSTALS-Kyber (FIPS 203) – Key Establishment KEM

CRYSTALS-Kyber is a lattice-based key-encapsulation mechanism used to establish shared secrets over an untrusted channel, similar to how ECDHE is used in TLS today [1]. It’s designed to be efficient and relatively compact, making it suitable for TLS, VPNs, and other protocols that need forward secrecy.

CAS-005 exam angle:

- Recognize Kyber as a post-quantum replacement for Diffie–Hellman/ECDH-style key exchange [1].

- Be able to justify its use in future TLS handshakes or IPsec-like protocols when asked to “design quantum-resistant transport security.”
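A KEM exposes three operations: key generation, encapsulation (the sender produces a ciphertext plus a shared secret), and decapsulation (the receiver recovers the same secret). The sketch below shows only that interface shape. It is built on a toy classical Diffie-Hellman group because the Python standard library has no Kyber implementation, so it is neither Kyber nor quantum-resistant, and all names and parameters are illustrative.

```python
import hashlib
import secrets

# Toy parameters for illustration only: a small classical DH group.
# This is NOT Kyber and offers no quantum resistance.
P = 2**127 - 1  # a Mersenne prime
G = 3


def _kdf(value: int) -> bytes:
    """Hash the raw group element into a fixed-length shared secret."""
    return hashlib.sha256(value.to_bytes(16, "big")).digest()


def keygen() -> tuple[int, int]:
    """Return (public_key, secret_key) for the receiver."""
    sk = secrets.randbelow(P - 2) + 1
    return pow(G, sk, P), sk


def encapsulate(public_key: int) -> tuple[int, bytes]:
    """Sender side: return (ciphertext, shared_secret)."""
    ephemeral = secrets.randbelow(P - 2) + 1
    ciphertext = pow(G, ephemeral, P)
    return ciphertext, _kdf(pow(public_key, ephemeral, P))


def decapsulate(ciphertext: int, secret_key: int) -> bytes:
    """Receiver side: recover the same shared secret from the ciphertext."""
    return _kdf(pow(ciphertext, secret_key, P))


pk, sk = keygen()
ct, sender_secret = encapsulate(pk)
print(decapsulate(ct, sk) == sender_secret)
```

In a protocol such as TLS, the shared secret would then feed the key schedule, just as an ECDHE output does today.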

4.2 CRYSTALS-Dilithium (FIPS 204) – Lattice-Based Signatures

CRYSTALS-Dilithium is a lattice-based digital signature scheme. It offers strong security with relatively efficient signing and verification, but with larger key and signature sizes than classical schemes like ECDSA [1].

Use cases include:

- Certificate authorities issuing PQC-enabled certificates

- Signing software artifacts, firmware, and configuration updates

- Authentication protocols where signatures prove identity

CAS-005 exam angle: in a design scenario, you may need to weigh signature size, performance, and implementation maturity when choosing between classical, PQC, and hybrid signature schemes.

4.3 SPHINCS+ (FIPS 205) – Stateless Hash-Based Signatures

SPHINCS+ is a stateless hash-based signature scheme. It’s conservative, relying only on the security of its underlying hash functions, but its signatures are much larger and signing is considerably slower than with lattice-based schemes; its public keys, by contrast, are small [1].

It’s attractive for high-assurance, low-volume signing (e.g., root certificates) where size is less important than long-term security.

4.4 Stateful Hash-Based Signatures (XMSS, LMS) – NIST SP 800-208

NIST SP 800‑208 approves two stateful hash-based signature schemes, XMSS and LMS, as additional PQC options [4]. Each key pair can produce only a fixed number of signatures, and every signature consumes a one-time key that must never be used again. The signer therefore has to track that state reliably, which complicates operational deployment.

From a CAS-005 perspective, these are most likely to appear in a scenario about code signing or firmware signing, where you need to choose between stateful and stateless schemes and reason about operational risk [4].
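The operational risk comes down to one invariant: a one-time-key index must never be handed out twice. The sketch below models only that bookkeeping with a hypothetical in-memory counter. It performs no real XMSS or LMS signing, and a production signer would need durable, tamper-resistant state (typically inside an HSM).

```python
class OneTimeKeyIndex:
    """Hands out each one-time-key index at most once."""

    def __init__(self, total_keys: int, next_index: int = 0):
        self.total_keys = total_keys
        self.next_index = next_index

    def reserve(self) -> int:
        """Return the next unused index, or fail once the key pair is exhausted."""
        if self.next_index >= self.total_keys:
            raise RuntimeError("key exhausted: generate a new key pair")
        index = self.next_index
        # A real signer must persist the incremented counter durably BEFORE
        # releasing the signature, so a crash or restore cannot replay an index.
        self.next_index += 1
        return index


state = OneTimeKeyIndex(total_keys=4)
print([state.reserve() for _ in range(4)])
```

Restoring such a signer from a backup or VM snapshot rolls the counter back, which is exactly the kind of reuse the scheme cannot tolerate.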

5. Post-Quantum Cryptography in CAS-005 Objectives

The official CASP+ CAS-005 exam objectives include post-quantum cryptography, with expectations around comparing PQC to classical schemes and understanding its impact on architecture and risk [2]. PQC-related knowledge intersects several CAS-005 domains:

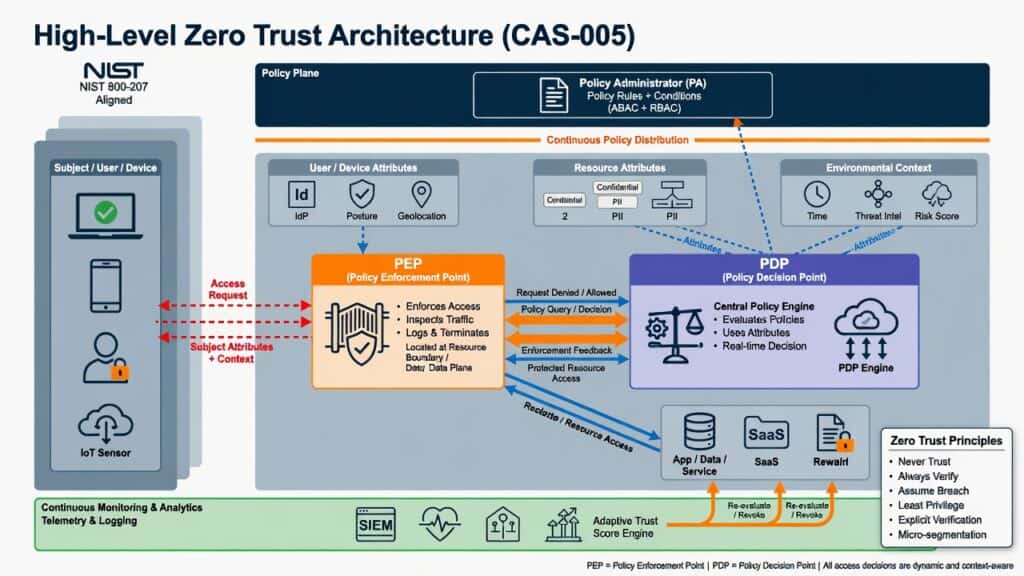

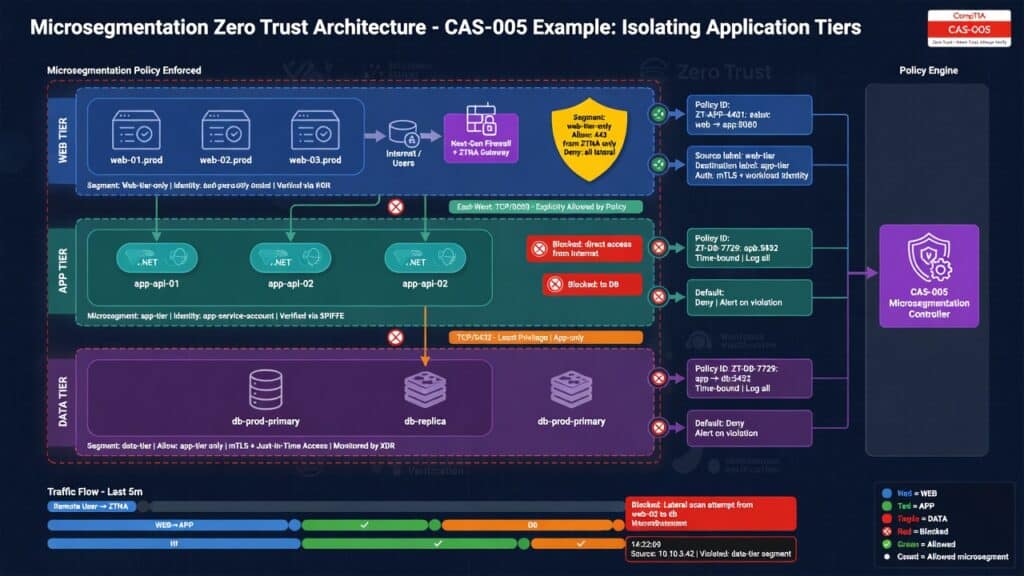

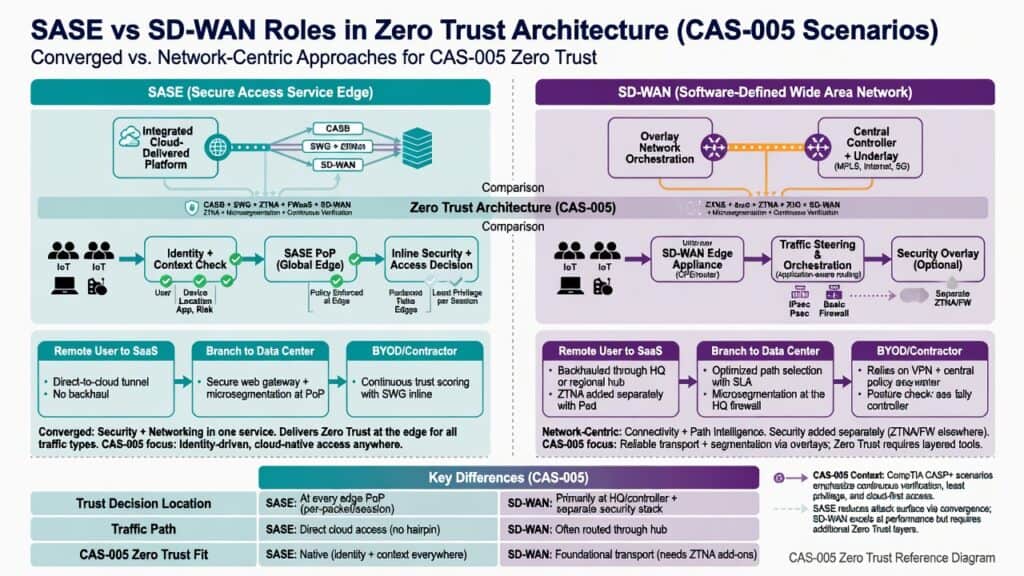

- Enterprise Security Architecture: Designing crypto-agile architectures, key management, and secure communication channels [2][3].

- Enterprise Security Operations: Managing certificate lifecycles, monitoring for weak/legacy crypto, enforcing crypto policies via configuration management [2][3].

- Governance, Risk, and Compliance: Evaluating long-term confidentiality risks and planning migration strategies to meet regulatory and policy expectations [2][3].

On the exam, expect PQC to appear in scenario-based questions and PBQs that test your ability to:

- Identify where classical public-key algorithms create quantum risk

- Recommend PQC or hybrid approaches that maintain interoperability

- Prioritize which systems and data should migrate first

6. Design Principles for a Post-Quantum World

Even before you roll out Kyber and Dilithium in production, you can design with post-quantum cryptography in mind. NIST and industry guidance emphasize several architectural principles [1][3][4]:

- Crypto agility: Architect systems so you can swap algorithms and key sizes without redesigning protocols or applications.

- Defense in depth: Combine PQC with strong symmetric crypto, access control, and monitoring; don’t rely on one layer.

- Prioritization by data value and lifetime: Migrate systems that handle high-sensitivity, long-lived data first [3].

- Standards alignment: Prefer algorithms and parameter sets standardized by NIST or recognized standards bodies for interoperability and assurance [1][4].

These principles map cleanly to NIST’s broader cybersecurity guidance around risk-based protection and continuous improvement, which CAS-005 expects you to apply across architectures [3].

7. Comparing Classical vs Post-Quantum Algorithms

For CAS-005 design questions, you should be able to compare classical and post-quantum options at a high level.

- Security assumptions

  - Classical public-key: factoring, discrete logarithms (broken by Shor’s algorithm) [1].

  - PQC (lattice-based): hardness of lattice problems (e.g., Learning With Errors). Not known to be broken by quantum algorithms [1].

  - PQC (hash-based): security of underlying hash functions (e.g., SHA-2/SHA-3) [4].

- Key and signature sizes

  - PQC keys and signatures are often much larger than RSA/ECC, which affects bandwidth and storage.

  - Hash-based signatures like SPHINCS+ can be especially large, but are very conservative [1][4].

- Performance and resources

  - PQC operations may be faster or slower than classical ones depending on algorithm and platform; you must consider CPU, memory, and latency.

  - Embedded and IoT devices may struggle with some PQC schemes.

- Maturity and ecosystem

  - RSA/ECC have decades of deployment and optimization.

  - PQC standards and implementations are newer; maturity, side-channel resistance, and library support must be evaluated [1][4].

In an exam scenario, when asked to “recommend a future-proof cryptographic design,” you should articulate these trade-offs and justify a phased or hybrid approach.
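The size trade-off is easier to argue with numbers. The sketch below compares a few parameter sets; ML-KEM, ML-DSA, and SLH-DSA are the names FIPS 203, 204, and 205 give to the Kyber, Dilithium, and SPHINCS+ derived schemes. The byte counts are quoted from memory of the published parameter sets and exclude encoding overhead, so treat them as approximate and verify them against the standards before relying on them.

```python
# Approximate raw sizes in bytes (assumed values; check FIPS 203/204/205).
SIGNATURES = {
    "ECDSA P-256": {"public_key": 64, "signature": 64},
    "ML-DSA-65 (Dilithium)": {"public_key": 1952, "signature": 3309},
    "SLH-DSA-SHA2-128s (SPHINCS+)": {"public_key": 32, "signature": 7856},
}
KEY_EXCHANGE = {
    "X25519": {"client_share": 32, "server_share": 32},
    "ML-KEM-768 (Kyber)": {"client_share": 1184, "server_share": 1088},
}


def signature_ratio(name: str, baseline: str = "ECDSA P-256") -> float:
    """How many times larger a signature is than the classical baseline."""
    return SIGNATURES[name]["signature"] / SIGNATURES[baseline]["signature"]


def handshake_bytes(name: str) -> int:
    """Total key-share bytes exchanged in both directions."""
    return sum(KEY_EXCHANGE[name].values())


for name in SIGNATURES:
    print(f"{name}: signature is about {signature_ratio(name):.0f}x ECDSA P-256")

hybrid = handshake_bytes("X25519") + handshake_bytes("ML-KEM-768 (Kyber)")
print(f"hybrid key shares: {hybrid} bytes vs {handshake_bytes('X25519')} bytes classical")
```

Even at these rough figures, a hybrid handshake adds a couple of kilobytes, which matters for constrained links and for protocols with fixed message-size limits.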

8. Hybrid Cryptography: Bridging Today and Tomorrow

Most organizations cannot rip-and-replace RSA and ECC overnight. The practical near-term approach is hybrid cryptography:

- Hybrid key establishment: Combine a classical key exchange (e.g., ECDHE) with a PQC KEM (e.g., Kyber) in one handshake, deriving session keys from both. If either algorithm remains secure, the session is protected [1].

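- Hybrid signatures: Use both a classical signature (e.g., ECDSA) and a PQC signature (e.g., Dilithium) on the same object (certificate, firmware). A verifier that requires both is protected as long as either scheme holds, while a legacy verifier that checks only the classical signature stays interoperable but gains no quantum resistance.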

This addresses interoperability—legacy systems validate classical algorithms, while PQC-aware systems validate the quantum-resistant component. For CAS-005, hybrid designs are strong answers when you must support existing clients while mitigating future quantum risk.
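The "derive session keys from both" step in hybrid key establishment can be sketched as feeding the two shared secrets through one key-derivation function. The example below uses HKDF (RFC 5869) built from the standard library’s hmac module. The concatenation order, the all-zero salt, and the info label are assumptions made for illustration, since real protocols define their own combiner, and the random bytes stand in for actual ECDHE and KEM outputs.

```python
import hashlib
import hmac
import secrets


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF with SHA-256: extract a pseudorandom key, then expand it."""
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def hybrid_session_key(classical_secret: bytes, pqc_secret: bytes) -> bytes:
    """Derive one session key that depends on both shared secrets."""
    # Both inputs are assumed to be fixed-length, so concatenation is unambiguous.
    return hkdf_sha256(classical_secret + pqc_secret,
                       salt=b"\x00" * 32, info=b"hybrid-demo")


# Placeholder secrets standing in for ECDHE and KEM outputs.
ecdhe_secret = secrets.token_bytes(32)
kem_secret = secrets.token_bytes(32)
print(hybrid_session_key(ecdhe_secret, kem_secret).hex())
```

Because both secrets enter the derivation, an attacker has to recover both of them to reconstruct the session key.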

9. A Practical PQC Migration Roadmap (CAS-005-Style)

In the real world and on CAS-005 PBQs, you’ll be asked to prioritize and plan. Here’s a high-level, exam-ready post-quantum cryptography migration roadmap:

9.1 Step 1 – Inventory Cryptographic Dependencies

Start with a crypto inventory across your environment [3]:

- Protocols: TLS (web, APIs), SSH, IPsec, VPNs, email (S/MIME, PGP), proprietary protocols

- Certificates and PKI: public CAs, private CAs, device certs, client certs

- Applications: custom code using crypto libraries, mobile apps, IoT devices

- Hardware: HSMs, smart cards, TPMs, secure elements

Identify where RSA, DH, and ECC are used, and note key sizes, certificate expiry dates, and protocol versions. This supports risk-based prioritization and aligns with NIST CSF’s emphasis on asset and dependency identification [3].
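As a rough illustration of what the inventory produces, the sketch below flags entries that use quantum-vulnerable public-key algorithms. The asset names and record fields are hypothetical; a real inventory would be populated from scanners, certificate stores, and configuration management data.

```python
# Public-key algorithms broken by Shor's algorithm.
QUANTUM_VULNERABLE = {"RSA", "DH", "ECDH", "ECDSA"}

# Hypothetical inventory records for illustration.
inventory = [
    {"asset": "public-web", "protocol": "TLS 1.3", "algorithm": "ECDH", "key_bits": 256},
    {"asset": "internal-ca", "protocol": "X.509", "algorithm": "RSA", "key_bits": 2048},
    {"asset": "backup-store", "protocol": "at-rest", "algorithm": "AES", "key_bits": 256},
    {"asset": "site-vpn", "protocol": "IPsec", "algorithm": "DH", "key_bits": 2048},
]


def quantum_vulnerable_assets(records: list[dict]) -> list[str]:
    """Return the assets whose algorithm must be replaced, not just strengthened."""
    return [r["asset"] for r in records if r["algorithm"] in QUANTUM_VULNERABLE]


print(quantum_vulnerable_assets(inventory))
```

The AES entry is deliberately left unflagged: symmetric keys are strengthened by lengthening them rather than migrated to a new algorithm family.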

9.2 Step 2 – Classify Data by Sensitivity and Lifespan

Next, determine which data and transactions must stay confidential for 5, 10, or 20+ years [3]:

- High sensitivity, long-lived: legal records, healthcare data, strategic IP, state secrets

- Medium sensitivity, medium-lived: most business data, logs, short-lived secrets

- Low sensitivity, short-lived: public content, ephemeral analytics

For CAS-005, be prepared to argue that systems handling high-sensitivity, long-lived data should be early PQC migration candidates because of harvest-now-decrypt-later risk [1][3].
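One way to turn this classification into a migration order is a simple score. The weighting below (sensitivity level multiplied by required years of confidentiality) and the system names are hypothetical choices for illustration, not a standard formula.

```python
SENSITIVITY = {"low": 1, "medium": 2, "high": 3}

# Hypothetical systems for illustration.
systems = [
    {"name": "marketing-site", "sensitivity": "low", "confidentiality_years": 0},
    {"name": "patient-records", "sensitivity": "high", "confidentiality_years": 25},
    {"name": "order-history", "sensitivity": "medium", "confidentiality_years": 7},
]


def migration_priority(system: dict) -> int:
    """Higher scores suggest earlier PQC migration."""
    return SENSITIVITY[system["sensitivity"]] * system["confidentiality_years"]


ranked = sorted(systems, key=migration_priority, reverse=True)
print([s["name"] for s in ranked])
```

A real program would also weigh exposure (for example, whether traffic crosses the public internet) and how hard each system is to change.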

9.3 Step 3 – Enable Crypto Agility

If your applications hard-code algorithms (e.g., “must use RSA-2048”), migration will be painful. Instead, refactor systems to support:

- Configurable cipher suites and signature algorithms

- Pluggable crypto modules or libraries

- Central policy control over allowed algorithms and key sizes

Crypto agility is a recurring theme in modern standards and frameworks, and it’s a design best practice CAS-005 expects you to recognize [2][3].
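The pattern behind crypto agility is that callers ask for "the approved algorithm" and a policy decides what that means. The sketch below demonstrates the idea with hash functions from Python’s hashlib, used here only as a stand-in because the standard library has no PQC primitives; the same indirection applies to signatures and key establishment. The policy layout is an assumption.

```python
import hashlib

# Central policy: changing the algorithm is a configuration edit, not a code change.
POLICY = {
    "digest": "sha256",
    "allowed_digests": ["sha256", "sha384", "sha3_256"],
}


def digest(data: bytes, policy: dict = POLICY) -> bytes:
    """Hash data with whatever algorithm the policy currently selects."""
    name = policy["digest"]
    if name not in policy["allowed_digests"]:
        raise ValueError(f"algorithm {name!r} is not permitted by policy")
    return hashlib.new(name, data).digest()


print(len(digest(b"hello")))  # length follows the configured algorithm
upgraded = {**POLICY, "digest": "sha384"}
print(len(digest(b"hello", upgraded)))
```

Application code calling digest() never names an algorithm, so a later policy change does not require touching it.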

9.4 Step 4 – Pilot PQC and Hybrid Schemes

Before wide rollout, run pilots with PQC-enabled stacks:

- Test hybrid TLS (classical + Kyber) in a controlled segment

- Evaluate Dilithium or SPHINCS+ for signing internal services or firmware

- Measure performance, latency, and resource usage

- Assess operational impacts (e.g., certificate size, log volume)

In exam scenarios, recommending a pilot in a lower-risk environment before full deployment demonstrates good risk management and aligns with NIST’s cautious approach to adopting new cryptography [1][3][4].

9.5 Step 5 – Plan Long-Term Decommissioning of Vulnerable Crypto

Your roadmap should include a sequence and timeline for:

- Disallowing weak keys (e.g., RSA-1024, ECC curves below recommended strength)

- Phasing out RSA/ECC-only PKI components

- Standardizing on PQC or hybrid algorithms for new deployments

- Updating policies, standards, and baselines to reflect PQC requirements

For CAS-005, always tie roadmap decisions back to risk reduction, regulatory expectations, and operational feasibility [2][3].

10. PQC and Key Management: What Changes?

Post-quantum cryptography does not remove the need for strong key management; in many ways, it makes it more complex:

- Larger keys and certificates: HSMs, smart cards, and PKI components must handle larger key sizes and signatures without breaking storage or protocol limits [1].

- Stateful schemes: If you use stateful hash-based signatures (XMSS, LMS), you must carefully manage signing state to avoid reuse, which can break security [4].

- Mixed ecosystems: You may need to manage classical, PQC, and hybrid keys simultaneously during migration.

In an exam design, mention these operational considerations when recommending PQC; it shows you understand that security is not just algorithms, but lifecycle management and operational resilience.

11. PQC in Common Architectures: Examples You Might See on CAS-005

11.1 Web and API Security (TLS)

Consider a scenario where you secure customer-facing web apps and APIs:

- Short term: Use strong classical suites (e.g., TLS 1.2/1.3 with ECDHE, AES-256) and monitor standards for PQC-ready TLS profiles.

- Medium term: Deploy hybrid TLS with ECDHE + Kyber once your stacks support it, especially for high-value data flows.

- Long term: Transition to PQC-only suites when client support is widespread and classical algorithms are deprecated.

An exam PBQ might show a TLS configuration matrix and ask you to choose settings that balance interoperability with future quantum resilience—hybrid is often the best answer when available.

11.2 VPNs and Remote Access

IPsec VPNs and SSL VPNs currently rely on DH/ECDH for key exchange and RSA/ECDSA for authentication. Moving toward PQC may involve:

- Upgrading VPN stacks to support PQC-enabled handshakes (e.g., Kyber-based KEMs)

- Using hybrid key exchange modes during transition

- Deploying PQC-capable client software and gateways

CAS-005 might not ask you for specific PQC ciphers in IPsec, but you should explain why current key exchange mechanisms are quantum-vulnerable and propose a migration approach.

11.3 PKI, Certificates, and Code Signing

Public key infrastructure and code signing are especially sensitive to long-term quantum risk:

- Root and intermediate CAs may adopt PQC or hybrid certificates (e.g., ECDSA + Dilithium) to protect long-lived trust anchors.

- Code signing for critical firmware and software might move to stateless hash-based signatures like SPHINCS+ or stateful schemes under SP 800‑208 [4].

- Certificate transparency logs and validation tools must handle larger certificates and signatures.

On the exam, if you are asked to protect firmware against long-term tampering and future quantum threats, recommending hash-based or lattice-based PQC signatures with careful validation is a strong answer [1][4].

12. Governance, Policy, and PQC: What CAS-005 Wants You to Think About

PQC is not only a technical migration; it’s a governance and risk topic. NIST and other frameworks emphasize policies and risk management that adapt to emerging threats [3][4]. For CAS-005, consider:

- Policy updates: Crypto policies should mention quantum risk, preferred algorithms, and timelines for deprecating vulnerable schemes.

- Risk register entries: Document quantum risk explicitly for systems with long-lived data or critical functions.

- Vendor and third-party risk: Assess whether CSPs, PKI providers, and vendors have credible PQC roadmaps.

- Training and awareness: Ensure architects, developers, and operations teams understand PQC basics and migration implications.

These actions align with governance and risk management expectations in CAS-005 and with NIST CSF’s focus on adapting to new threats and technologies [2][3].

13. How to Study PQC Efficiently for CAS-005

You don’t need to become a cryptographer to score well on PQC topics in CAS-005. Focus your study on:

- Conceptual understanding

  - Why RSA, DH, and ECC are vulnerable to quantum computers [1].

  - What “post-quantum” means and the difference between PQC and quantum-key distribution.

- NIST PQC algorithms at a high level

  - Names and roles: Kyber (KEM), Dilithium (signature), SPHINCS+ (stateless hash-based signature), XMSS/LMS (stateful hash-based signatures) [1][4].

  - Basic trade-offs: size, performance, complexity.

- Architecture and migration

  - Crypto agility and hybrid deployments.

  - Prioritizing systems based on data sensitivity and lifespan.

  - Impacts on PKI, VPNs, TLS, and key management.

- Practice with scenarios

  - Explain to a non-crypto stakeholder why PQC matters.

  - Design a high-level roadmap for a critical system.

  - Identify weak points in a given architecture’s crypto choices.

Whenever you see a CAS-005 question about “future-proof,” “long-term confidentiality,” or “emerging cryptographic threats,” think about whether post-quantum cryptography is part of the expected answer.

14. Bringing It Together: PQC for the CAS-005 Exam and Your Career

Post-quantum cryptography is moving from theory to practice. NIST’s standards for Kyber, Dilithium, and SPHINCS+ mean that over the lifetime of your career, you will likely help design and implement PQC migrations [1][4]. CAS-005 is already preparing you for that by emphasizing crypto agility, long-term risk management, and architecture-level decision making [2][3].

If you can explain the quantum threat, name and categorize the main NIST PQC algorithms, outline a hybrid migration strategy, and reason about operational impacts, you’ll be in a strong position—both for the exam and for leading your organization’s crypto-modernization journey.

As you study, keep asking: “If this system needs to stay secure for the next 10–20 years, what does post-quantum cryptography change about my design?” That’s the mindset CAS-005 is testing—and the mindset practitioners need.

For broader exam prep, pair this article with resources on zero trust architecture and crypto-agile key management to build a holistic view of future-ready security design [2][3].

Bibliography

[1] National Institute of Standards and Technology. (2024, August 13). *Announcing approval of three Federal Information Processing Standards (FIPS) for post-quantum cryptography* [News release]. U.S. Department of Commerce. https://csrc.nist.gov/news/2024/postquantum-cryptography-fips-approved

[2] CompTIA. (2023). *CompTIA CASP+ (CAS-005) exam objectives* [Exam objectives]. CompTIA. https://www.comptia.org/certifications/casp

[3] National Institute of Standards and Technology. (2018). *Framework for improving critical infrastructure cybersecurity* (Version 1.1) [NIST Cybersecurity Framework]. U.S. Department of Commerce. https://doi.org/10.6028/NIST.CSWP.04162018

[4] National Institute of Standards and Technology. (2020). *Recommendation for stateful hash-based signature schemes* (NIST Special Publication 800-208). U.S. Department of Commerce. https://doi.org/10.6028/NIST.SP.800-208